RLWRLD’s RLDX-1 targets contact-rich robot manipulation

RLWRLD open-sources RLDX-1, a vision-language-action (VLA) model built around torque and tactile feedback for five-finger manipulation.

RLWRLD has released RLDX-1, a robotics foundation model built for dexterous manipulation with five-finger robot hands, alongside a technical report and open-source model weights.

RLWRLD was founded in 2024 by Jung-hee Ryu, a serial entrepreneur whose previous company, Olaworks, was acquired by Intel in 2012. It was Intel's first-ever acquisition of a Korean company, and Olaworks subsequently became Intel's Korea computer vision R&D centre. Ryu later founded FuturePlay, a deep tech startup accelerator he ran for thirteen years. His rationale for starting RLWRLD came from observation: he noticed how quickly AI startups were scaling in the United States, Europe, and China while comparable companies in Korea and Japan were largely absent, and he concluded that, given both countries' depth in manufacturing, robotics foundation models were a more strategic entry point than large language models.

The company brought on six professors from KAIST, SNU, and POSTECH as founding researchers, with Jinwoo Shin, KAIST Chair Professor in AI, serving as Chief Scientist. The broader team includes a former CTO of Kurly and a former BCG partner alongside RLWRLD's research faculty.

RLWRLD has raised approximately $41 million across two seed rounds: the first, led by Hashed, in April 2025, and the second, led by Headline Asia and Z Venture Capital, in February 2026. Strategic investors in the first round include LG Electronics and SK Telecom from Korea, and ANA Group, KDDI, Mitsui Chemicals, and Shimadzu from Japan. The second round added CJ Logistics, Lotte Ventures, Kakao Investment, Hanwha Asset Management, and Smilegate Investment as strategic investors.

Collaborative projects with CJ Logistics and Lotte are under way across logistics, distribution, and service environments, and some have advanced to joint deployment following memoranda of understanding. This arrangement means RLWRLD trains its models inside live industrial operations run through its investor network rather than in controlled lab settings.

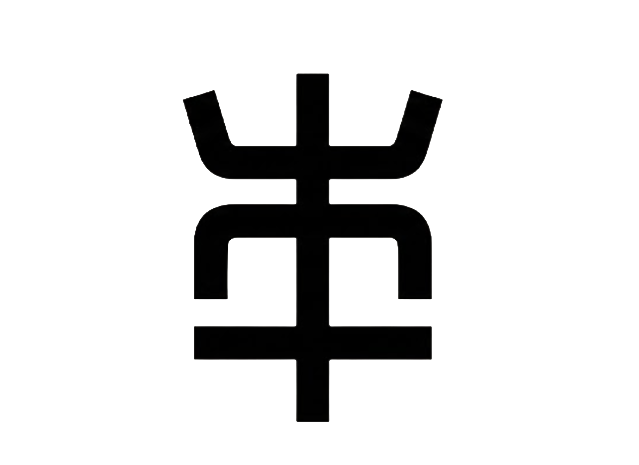

RLDX-1 uses a Multi-Stream Action Transformer architecture that processes torque, tactile feedback, and working memory alongside vision and language within a single model. Each modality is assigned its own independent stream, and the streams are integrated through joint attention. The design targets three functional gaps in standard vision-language-action models: motion awareness, long-term memory, and physical sensing. RLWRLD's stated position is that standard VLA models treat manipulation primarily as a visual and linguistic problem and struggle with contact-heavy tasks, where force and torque carry the operationally useful information.

Source: RLWRLD - RLDX-1 architecture
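The announcement does not spell out the layer internals, so the following is only a minimal sketch of the general idea of per-modality streams joined by a shared attention pass. It assumes single-head attention, tiny random weights, and no residual connections or feed-forward layers; the `Stream` class, the `joint_attention` function, the token sizes, and the modality names are hypothetical illustrations, not RLDX-1's code.

```python
import math
import random

# Hypothetical sketch of multi-stream joint attention; NOT RLDX-1's implementation.
# Simplifications (assumptions): single head, no residuals, no MLPs, random weights.


def matvec(w, x):
    """Multiply matrix w (list of rows) by vector x."""
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def rand_matrix(rows, cols, rng):
    return [[rng.gauss(0.0, 0.5) for _ in range(cols)] for _ in range(rows)]


class Stream:
    """Independent Q/K/V/output projections for one modality (hypothetical)."""

    def __init__(self, dim, rng):
        self.wq = rand_matrix(dim, dim, rng)
        self.wk = rand_matrix(dim, dim, rng)
        self.wv = rand_matrix(dim, dim, rng)
        self.wo = rand_matrix(dim, dim, rng)


def joint_attention(tokens_by_stream, streams, dim):
    """Project each modality with its own stream, attend jointly, route outputs back."""
    q, k, v, owner = [], [], [], []
    # 1. Stream-specific projections: each modality uses only its own weights.
    for name, tokens in tokens_by_stream.items():
        s = streams[name]
        for t in tokens:
            q.append(matvec(s.wq, t))
            k.append(matvec(s.wk, t))
            v.append(matvec(s.wv, t))
            owner.append(name)
    # 2. Joint attention: every token attends over the concatenated sequence of
    #    all modalities, so torque tokens can inform vision tokens and vice versa.
    scale = 1.0 / math.sqrt(dim)
    out = {name: [] for name in tokens_by_stream}
    for i, qi in enumerate(q):
        weights = softmax([scale * sum(a * b for a, b in zip(qi, kj)) for kj in k])
        mixed = [sum(w * vj[d] for w, vj in zip(weights, v)) for d in range(dim)]
        # 3. The mixed result goes back through the owning stream's output projection.
        out[owner[i]].append(matvec(streams[owner[i]].wo, mixed))
    return out


DIM = 4
rng = random.Random(0)
modalities = ["vision", "language", "torque", "tactile", "memory"]
streams = {m: Stream(DIM, rng) for m in modalities}
# Two hypothetical tokens per modality, each a DIM-length vector.
inputs = {m: [[rng.gauss(0.0, 1.0) for _ in range(DIM)] for _ in range(2)] for m in modalities}
outputs = joint_attention(inputs, streams, DIM)
for m in modalities:
    print(m, len(outputs[m]), len(outputs[m][0]))  # prints e.g. "vision 2 4"
```

In this sketch, keeping separate weights per stream lets each modality use projections suited to its own signal (torque readings look nothing like image patches), while the single shared softmax still lets every modality condition on every other one.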

The pre-training corpus spans three embodiment categories: single-arm robots with grippers, dual-arm robots with grippers, and humanoid robots with dexterous hands. It covers diverse morphologies, environments, and task semantics to encourage embodiment-agnostic representations. Because humanoid dexterous-hand data is comparatively scarce, RLWRLD supplemented the corpus with 150,000 episodes of synthetic humanoid data generated through its own pipeline.

RLWRLD released three versions of the model, each with 8.1 billion parameters: a pre-trained checkpoint and two mid-training checkpoints, optimised for WIRobotics' ALLEX humanoid and for the DROID dataset respectively. Weights are available on Hugging Face under a non-commercial licence, and the code is on GitHub under Apache 2.0. A single backbone runs across the ALLEX, Franka Research 3, and OpenArm hardware platforms, and the model was built on NVIDIA's Isaac GR00T N1.7 framework.

The hardware substrate for RLDX-1's dexterous benchmarking is ALLEX, a compliant humanoid platform developed by WIRobotics. The South Korean company was founded in 2021 by four former Samsung engineers and raised KRW 13 billion in Series A funding in March 2024. WIRobotics formed a strategic partnership with RLWRLD to develop ALLEX's intelligence. The platform is built around a 15-degree-of-freedom hand that can detect forces as small as 100 gf (about 1 N) without tactile sensors and offers 0.3 mm fingertip repeatability; its arms have more than ten times lower friction and rotational inertia than conventional collaborative robot arms.

Source: WIRobotics - ALLEX

Collecting dexterous manipulation data requires a hardware platform with enough compliance and force resolution to capture what contact actually feels like, rather than approximating it through position control. Alongside the RLWRLD partnership, WIRobotics also collaborates on research with MIT, UIUC, UMass, and KIST.

On ALLEX humanoid tasks, RLDX-1 achieved an 86.8% success rate, against approximately 40% for Physical Intelligence's pi0.5 and NVIDIA's GR00T N1.6. In a physical hardware test on ALLEX, the model completed a pot-to-cup pouring task 70.8% of the time, roughly double the high-30% range in which RLWRLD places comparable models. RLWRLD also introduced DexBench, a proprietary benchmark developed and evaluated by RLWRLD itself, covering five manipulation domains.
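To put the reported success rates in proportion, the ratios can be worked out directly. This is a minimal sketch under stated assumptions: RLWRLD gives only approximate baselines, so "approximately 40%" is treated here as exactly 40%, and "high-30% range" is assumed to mean 36 to 39%. The `ratio` helper and the variable names are illustrative only.

```python
# Hedged arithmetic check on the reported success rates (all values in percent).
# Assumptions: "approximately 40%" is taken as 40.0, and "high-30% range" as 36 to 39.


def ratio(model_rate, baseline_rate):
    """How many times higher the model's success rate is than the baseline's."""
    return model_rate / baseline_rate


allex_ratio = ratio(86.8, 40.0)     # ALLEX tasks vs pi0.5 / GR00T N1.6
allex_gap_pp = 86.8 - 40.0          # gap in percentage points
pour_ratio_min = ratio(70.8, 39.0)  # against the top of the assumed high-30% range
pour_ratio_max = ratio(70.8, 36.0)  # against the bottom of the assumed range

print(f"ALLEX tasks: {allex_ratio:.2f}x, {allex_gap_pp:.1f} pp higher")
print(f"Pouring: {pour_ratio_min:.2f}x to {pour_ratio_max:.2f}x")
```

Under these assumptions the pouring result comes out at about 1.8 to 2.0 times the baseline, consistent with "roughly double", while the ALLEX task result is about 2.2 times the baseline, a gap of 46.8 percentage points. The comparison says nothing about whether the baselines were run under identical conditions.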

RLWRLD will hold a launch event called Dexterity Night in San Francisco on May 13 to announce additional hardware partnerships from the United States, Japan, and South Korea. The company has said its next development target is a 4D world model designed to simulate contact, torque, and robot state over time, extending RLDX-1 from a manipulation policy into a predictive physical simulation layer.

